Today Nvidia launched their much-anticipated RTX 3000 series graphics cards. In what’s now becoming the norm, the three new cards (the RTX 3070, RTX 3080 and RTX 3090) were presented at an online streamed event. Let’s take a deeper look, because there are a bunch of really interesting revelations here.

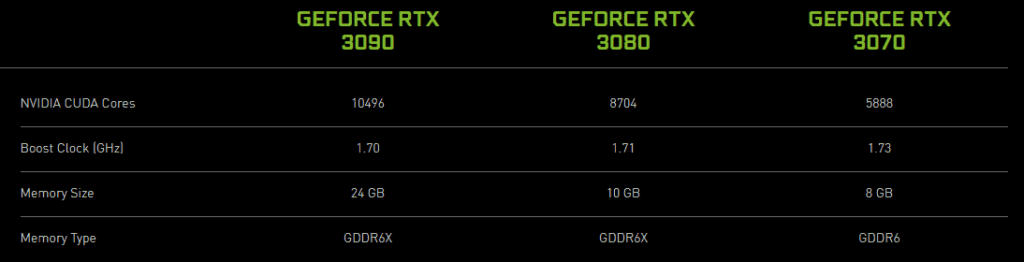

You can check out the full launch in the video above, but I’ll save you a bit of watching and just trot out the raw specs below:

Prices are set, for the Founders Edition, at $1,499 USD / $2,429 AUD for the RTX 3090, $699 USD / $1,139 AUD for the RTX 3080 and $499 USD / $809 AUD for the RTX 3070. Yes, you read that right: an eye-melting, wallet-annihilating $2,429 AUD for a single graphics card. Insane.

A Generational Step

Now obviously these GPUs are bigger (literally), better and faster than the RTX 2000 series cards, as one would expect. However, I thought I’d go into a bit more detail with this post, as it represents quite a pivotal step in the long-term trend I’ve been seeing.

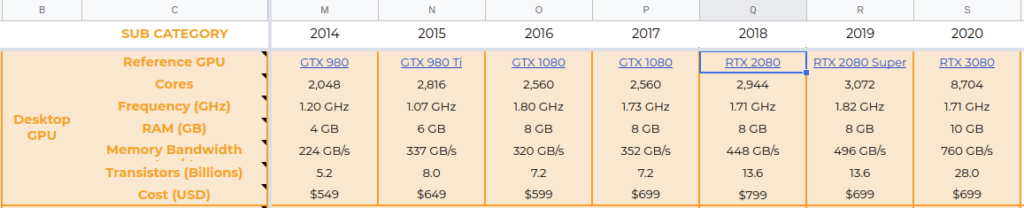

For the past five years or so, Nvidia’s GPUs, amazing as they are, have only improved marginally. As you can see in the cut-out from our Technology Forecaster above, this is easy to spot if you compare the specs across the same “level” of GPU (the xx80 series) over the years.

From 2015 to 2019, all the most meaningful specs of their graphics cards had essentially been standing still. Sure, there were improvements, especially with the introduction of the RTX line and its RTX/AI cores. Notably, the RTX 2080 was the biggest leap, increasing the transistor count by a big margin (from 7.2 billion to 13.6 billion). But at the end of the day we were still seeing steady, modest improvements rather than huge leaps like a doubling or tripling of specs.

Throughout 2015 to 2019, the CUDA core count stayed around 2,500-2,900. Now the new RTX 3080 has crushed all those past years, pounding out a whopping 8,704! That’s a ridiculous 2.8x increase over last year’s RTX 2080 Super, and by far the biggest headline feature in my opinion. Nvidia deserve a huge pat on the back for this. Seriously, well done to all the engineers and people at Nvidia; that near-tripling of cores is astonishing!

We also see a similar trend in memory bandwidth, which is critical for AI training: the faster your GPU can receive the batches (essentially little groups of data), the faster the entire training session will be. The RTX 3080 gives us a 1.5x improvement in this area, along with an additional 2GB of memory. We’ve had four long years of 8GB, so it’s about damn time Nvidia increased this. Memory size is another critical component in AI training, as it allows for more parameters in your model and thus more complex and competent AIs. More memory also helps with higher-resolution screens, which is nice too.
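To put the memory point into rough numbers, here is a back-of-the-envelope sketch. It leans on a common rule of thumb that is not from this post: fp32 training with the Adam optimizer needs roughly 16 bytes per parameter (weights, gradients and two optimizer states). It ignores activations, batch data and framework overhead entirely, so real limits are considerably lower. The helper `max_trainable_params` is purely illustrative.

```python
# Rough upper bound on how many parameters fit in GPU memory for training.
# Assumption (rule of thumb, not from the post): fp32 + Adam costs about
# 16 bytes per parameter = 4 (weights) + 4 (gradients) + 8 (two Adam states).
# Activations, batch data and framework overhead are ignored.
BYTES_PER_PARAM_ADAM_FP32 = 16
GB = 10**9  # decimal gigabytes, for simplicity


def max_trainable_params(memory_gb: int, bytes_per_param: int = BYTES_PER_PARAM_ADAM_FP32) -> int:
    """Return how many parameters' worth of training state fits in memory_gb."""
    return memory_gb * GB // bytes_per_param


for label, mem_gb in [("8 GB", 8), ("10 GB (RTX 3080)", 10), ("24 GB (RTX 3090)", 24)]:
    params = max_trainable_params(mem_gb)
    print(f"{label}: ~{params / 1e6:,.0f} million parameters")
```

The absolute numbers matter less than the ratios: under the same assumptions, going from 8 GB to 10 GB buys 25% more room, and 24 GB triples it.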

Finally, the other area where we can clearly see a generational step rather than just a “bump” is transistor count. When Nvidia launched the RTX 2080, they made a big deal about how it was basically the biggest consumer chip ever made, with 13.6 billion transistors. And they were right! It was a beast!

Now the new RTX 3080 casually comes in and decimates that number with 28 billion transistors on deck. That’s a straight-out doubling of transistors, all for the same price as last year. Who said Moore’s Law was dead?
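As a quick sanity check on the generational multipliers in this section, the sketch below simply divides the new figures by the old ones. The RTX 2080 Super baseline of 3,072 CUDA cores and the memory bandwidth figures (496 GB/s for the RTX 2080 Super versus 760 GB/s for the RTX 3080) come from Nvidia’s published spec sheets rather than from this post. The transistor counts are the ones quoted above.

```python
# Generation-over-generation multipliers for the xx80 tier.
# Baseline figures for the RTX 2080 Super are taken from Nvidia's published
# specs, not from the post itself.
specs = {
    "CUDA cores": (3_072, 8_704),            # RTX 2080 Super -> RTX 3080
    "Memory bandwidth (GB/s)": (496, 760),   # RTX 2080 Super -> RTX 3080
    "Transistors (billions)": (13.6, 28.0),  # RTX 2080 -> RTX 3080
}


def ratio(old: float, new: float) -> float:
    """How many times larger the new figure is than the old one."""
    return new / old


for name, (old, new) in specs.items():
    print(f"{name}: {ratio(old, new):.2f}x")
```

This works out to roughly 2.8x for CUDA cores, 1.5x for bandwidth and just over 2x for transistors, in line with the figures above.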

Dat Price

Speaking of prices, there have been a lot of complaints recently about Nvidia taking advantage of their near monopoly on high-end consumer GPUs. People lament that prices have skyrocketed higher and higher, and with no competition, well… what other choice do you have?

Interestingly, though, I don’t believe Nvidia are as much to blame as you might initially think. My main argument is that back in 2010 they launched the GTX 480. It was near the top of the line, just like the RTX 3080 is now, only it sold for $499 USD.

Now if we take that $499 USD and compound 10 years of inflation at 3% a year, we get about $670 USD. The RTX 3080 is launching at $699 USD, and to me at least, that seems like a pretty fair deal. I mean sure, it’s not cheap… but once inflation is taken into account, you can see they haven’t really increased their prices much at all.
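The inflation arithmetic is plain compound growth: price × (1 + rate)^years. Here is a minimal sketch using the post’s own simplifying assumption of a flat 3% a year, which is an illustration rather than official CPI data.

```python
def adjust_for_inflation(price: float, annual_rate: float, years: int) -> float:
    """Compound a price forward by a fixed annual inflation rate."""
    return price * (1 + annual_rate) ** years


# The post's assumption: a flat 3% a year over the 10 years from 2010 to 2020.
gtx_480_launch_usd = 499
adjusted = adjust_for_inflation(gtx_480_launch_usd, 0.03, 10)
rtx_3080_launch_usd = 699

# How much more the RTX 3080 costs than the inflation-adjusted GTX 480.
premium = rtx_3080_launch_usd / adjusted - 1
print(f"GTX 480 price in 2020 dollars: ${adjusted:,.2f}")  # $670.61
print(f"RTX 3080 premium over that: {premium:.1%}")       # 4.2%
```

On these assumptions, the RTX 3080 costs only about 4% more in real terms than the GTX 480 did.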

I think what most people see as “unfair price hikes” is really just the absence of what we used to get: big leaps in GPU performance together with price reductions. In the past, tech has both improved and gotten cheaper in real terms over time.

Let’s Talk RTX 3090

I can’t finish this piece off without bringing up the technical marvel that is the RTX 3090. This thing is just absurd. The price is absurd, the amount of memory is absurd, the resolutions and frame rate it can drive are absurd and I love it!

It takes up three expansion slots, has 24 GB of GDDR6X memory and, literally, over 9,000 CUDA cores. Interestingly, it’s also the only RTX 3000 series GPU that supports SLI, which is quite a change.

With that insane amount of high-speed memory, the 3090 seems to have been made for two main uses: AI and ultra-high-resolution gameplay. It can push 8K resolution at 60 frames per second or more (at least on High settings) and even do HDR. If you’re looking for the balls-to-the-wall, no-compromise, no-money-left-in-your-account experience, this is it.

If you’re an AI researcher or doing some serious rendering/CAD/engineering work, it also looks to be the ultimate flex. With 24 GB of memory, you’ll be able to train far more complex AI models and load in more data, no matter what you’re running.

While it’s obviously not for everyone at that eye-watering price, I appreciate Nvidia giving it everything they’ve got so that we can keep pushing further into the future. The more demand these cards see, the more money Nvidia has to develop better and better tech for all of us.

The Time To Upgrade

If you’ve been sitting on the fence, especially regarding RTX, waiting to upgrade for a few years now, you’re not alone. With the higher perceived prices and, frankly, the incremental advances shown above, there hasn’t really been much reason to shell out $800+ AUD for a “new generation” GPU lately.

For the past five years, things have been stuck at about 2,500 CUDA cores and 8GB of memory. While the RTX and AI cores Nvidia introduced with the RTX 2080 back in 2018 were a good thing, it’s taken the last two years for the software and games to really mature and roll out.

There are now many, many games that support the various RTX features, such as DLSS and ray tracing (ray-traced ambient occlusion, shadows and diffuse global illumination). Plus, with the newer AI and RTX cores and the release of DLSS 2.0, which uses AI to upscale a lower-resolution frame into a higher-resolution one, you can now get the full RTX effects at high frame rates and at high resolutions too. Gone are the days of turning RTX on with your $1,200 AUD GPU and seeing 25 fps.
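To see why upscaling helps frame rates so much, it helps to count pixels. The sketch below compares how many pixels are actually rendered before upscaling with how many end up on screen. The 2560×1440 internal resolution for a 4K output is an illustrative assumption: the internal resolution DLSS uses depends on the quality mode and the game, and pixel count is only a rough proxy for rendering cost.

```python
# Fraction of the final image that is actually rendered before upscaling.
# Assumption: internal render at 2560x1440, output at 3840x2160 (4K).
# Real DLSS internal resolutions vary by mode and game.


def pixel_count(width: int, height: int) -> int:
    """Total number of pixels in a frame."""
    return width * height


def rendered_fraction(internal: tuple[int, int], output: tuple[int, int]) -> float:
    """Share of output pixels that are rendered natively before upscaling."""
    return pixel_count(*internal) / pixel_count(*output)


frac = rendered_fraction((2560, 1440), (3840, 2160))
print(f"Rendered pixels: {frac:.1%} of the 4K output")
```

Under this assumption, the GPU shades fewer than half of the final pixels natively and the upscaler reconstructs the rest, which is where much of the frame rate headroom comes from.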

The time is right for RTX, and the hardware has now taken a huge, definitive step up with the RTX 3000 series. It’s time to buy… but which one is the best value for money? Surprisingly, it seems to be the more expensive RTX 3080 rather than the RTX 3070.

| GPU | Price (AUD) | CUDA Cores | CUDA Cores per AUD |
| --- | --- | --- | --- |
| RTX 3070 | $809 | 5,888 | 7.3 |
| RTX 3080 | $1,139 | 8,704 | 7.6 |
| RTX 3090 | $2,429 | 10,496 | 4.3 |

Usually the sweet spot for value for money sits in the lower tiers, but because of the super amped-up (pun intended) specs of the 3080, it looks like the best option this time.
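The cores-per-dollar column is simply CUDA cores divided by the Australian Founders Edition price. Here is a short sketch that recomputes it from the table’s figures and picks the best value by that single metric. This metric ignores clock speeds, memory and everything else that affects real-world performance.

```python
# CUDA cores per Australian dollar, using the AUD Founders Edition prices
# and core counts listed in the table.
cards = {
    "RTX 3070": (809, 5_888),
    "RTX 3080": (1_139, 8_704),
    "RTX 3090": (2_429, 10_496),
}


def cores_per_dollar(price_aud: float, cuda_cores: int) -> float:
    """CUDA cores bought per Australian dollar."""
    return cuda_cores / price_aud


for name, (price, cores) in cards.items():
    print(f"{name}: {cores_per_dollar(price, cores):.1f} cores per AUD")

# Pick the card with the highest cores-per-dollar ratio.
best = max(cards, key=lambda name: cores_per_dollar(*cards[name]))
print(f"Best value by this metric: {best}")
```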

So are you going to do a GPU upgrade once these cards become available later this month? Which one are you getting? Let us know in the comments!
